Data for Everyone

Powering the world's best data teams

The MOSTLY AI Data Intelligence Platform

Unlock the power of data

Access and work with production data securely, generate high-quality, privacy-safe synthetic data, and seamlessly analyze and share data across teams. With agentic data science at its core, the Platform enables organizations to accelerate AI innovation, streamline workflows, and drive smarter decision-making at scale.

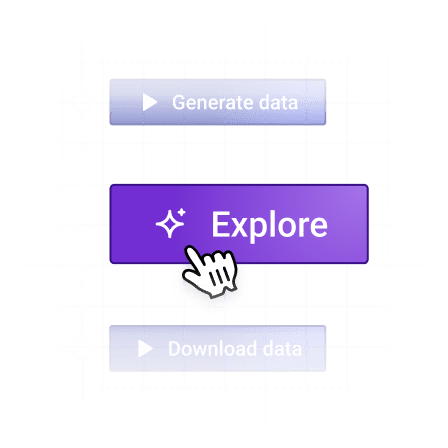

Real-World Data

Surface insights from live production data sourced directly from enterprise systems or data platforms such as Databricks. Analyze patterns, monitor performance, and track trends to reflect real operational conditions and support informed decision-making across teams.

Mock Data

Generate realistic structured and text-based data for safe experimentation, prototyping, and testing. Ideal for staging environments, demos, or scenarios where access to production data is limited or restricted.

Synthetic Data

Create high-fidelity, privacy-safe datasets that mimic real data without exposing sensitive information. Synthetic data enables safe collaboration, model training, and data sharing across teams or partners.

Simulated Data

Model edge cases, future scenarios, and what-if conditions using controlled data simulation. Simulations are based on real or synthetic data and are ideal for stress testing strategies, validating assumptions, and testing algorithm performance in a safe environment.

Agentic. Secure.

For everyone.

Built for everyone: individuals, teams, and enterprise organizations. Connect to your data within your secure environment. Run on your compute. Gain insights from your production data with the AI Assistant. Leverage synthetic data to broaden data access across your whole organization.

AI-powered insights

Use simple natural language to create and run Python code and analyze your data.

Teamwork made easy

Organize, manage, and collaborate on shared assets with your team.

Enterprise-ready

Scalable, secure deployment on Kubernetes or OpenShift.

Share data globally

Create privacy-safe synthetic data and share it with the world.

Simple & powerful

An easy-to-use platform for everyone, from beginner to expert.

Built for AI

Accelerate your AI workloads by creating the data your teams need.

The Synthetic Data SDK

Get started instantly

Powered by the industry-leading TabularARGN model architecture, the SDK generates high-fidelity synthetic data with built-in differential privacy, 100x faster training, advanced sampling, and support for complex tabular and textual datasets.

A fully permissive Open Source project under an Apache v2 license.

!pip install -U mostlyai

# initialize the SDK

from mostlyai.sdk import MostlyAI

mostly = MostlyAI()

# train a generator

g = mostly.train(data="/path/to/data")

# inspect generator quality

g.reports(display=True)

# generate any number of new privacy-safe samples

mostly.probe(g, size=1_000_000)

# generate new synthetic samples to your needs

mostly.probe(g, seed=[{'age': 65, 'gender': 'male'}])

# export and share your generator

g.export_to_file()
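The seeded `probe` call above takes a list of dictionaries, one per row to condition on. As a minimal sketch, the list can be built for every combination of a few column values before it is passed to `mostly.probe`. The helper names `build_seed` and `probe_with_seed` and the column values are hypothetical, chosen for illustration only. The sketch assumes `mostly` and `g` exist as in the snippet above.

```python
from itertools import product


def build_seed(**columns):
    """Return one seed row (a dict) per combination of the given column values."""
    names = list(columns)
    return [dict(zip(names, combo)) for combo in product(*columns.values())]


# Hypothetical column values: 3 ages x 2 genders gives 6 seed rows.
seed = build_seed(age=[25, 45, 65], gender=["male", "female"])


def probe_with_seed(mostly, generator, seed_rows):
    """Pass the seed rows to the SDK, mirroring the `mostly.probe(g, seed=...)` call above."""
    return mostly.probe(generator, seed=seed_rows)
```

Calling `probe_with_seed(mostly, g, seed)` would then request synthetic samples conditioned on each seed row.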

Your data never leaves your environment

Create synthetic data locally in your Python environment, so you stay in full control of your data.
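A rough sketch of what a local workflow might look like: a small CSV file is written to a temporary directory on your machine, and its path is handed to `mostly.train`. The file name, the helper names `write_sample_csv` and `train_locally`, and the three toy rows are made up for illustration. It is also an assumption that `data=` accepts the path to a CSV file, since the snippet above only shows `"/path/to/data"`. The toy rows are far too few for real training.

```python
import csv
import tempfile
from pathlib import Path


def write_sample_csv(directory):
    """Write a tiny, made-up table to <directory>/customers.csv and return its path."""
    path = Path(directory) / "customers.csv"
    rows = [
        {"age": 34, "gender": "female"},
        {"age": 65, "gender": "male"},
        {"age": 41, "gender": "female"},
    ]
    with path.open("w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=["age", "gender"])
        writer.writeheader()
        writer.writerows(rows)
    return path


def train_locally(csv_path):
    """Train a generator from a local file (requires `pip install -U mostlyai`)."""
    from mostlyai.sdk import MostlyAI  # imported lazily so the helper above runs without the SDK

    mostly = MostlyAI()
    # Assumption: `data` may be a path to a CSV file.
    return mostly.train(data=str(csv_path))


workdir = tempfile.mkdtemp()
csv_path = write_sample_csv(workdir)
```

With the SDK installed and a realistically sized file, `g = train_locally(csv_path)` would stand in for the `mostly.train(...)` step shown earlier.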

Seamless integration

Export your Generators and upload them to the MOSTLY AI Data Intelligence Platform for exploration and sharing.

Customer and Partner Testimonials

See how leading organizations are transforming their data strategies with synthetic data solutions

Swiss Post

"Synthetic Switzerland" Unlocks 89% More Customer Data

“Using the Synthetic Data SDK with our whole customer base, we can increase access from 11% to 100% with a synthetic customer base that mirrors the original dataset but with 100% privacy.”

— Sanzio Monti

Data Scientist, Swiss Post

Erste Group

Synthetic Data Accelerates Model Development

“Synthetic data is used in every (Databricks) environment except Production... due to very limited data in UAT and PHAT. We’re not allowed to test on production data.”

— Vukola Milenkovic

Databricks Solution Manager, Erste Group

AWS

Synthetic Data Accelerates Customer’s Cloud Migration

“A fundamental shift in how organizations are appreciating the ability of synthetic data to release all these data silos... to unlock the value of their data today instead of waiting for years.”

— Faris Haddad

AI Strategy Lead, AWS

Databricks

Leveraging Synthetic Data in Clean Rooms to Unlock Cross-Industry Intelligence

“That’s where I think synthetic data really comes into the picture - where you're able to take a valuable data asset and then generate a synthetic data asset that essentially mimics that data itself... whether directly via Delta Sharing to your partner or in a Clean Room setting.”

— Nikhil Gaekwad

Product Lead, Databricks
