TL;DR The broad adoption of connected devices equipped with GPS sensors adds geo context to nearly all customer data assets. However, location data—in particular, location traces—are nearly impossible to anonymize with legacy techniques as they allow for easy re-identification. The latest release of MOSTLY GENERATE ships with geo data support, thus allowing any organization to synthesize and truly anonymize their geo enriched data assets at scale.

The rise of geo data

Every phone knows its own location. And every watch, car, bicycle, and connected device will soon know its own location too. This creates a huge trove of geospatial data, enabling smart, context-aware services as well as increasing location intelligence for better planning and decision-making across all industries. Footprint data, a valuable asset of telecommunications companies, is a sought-after data type helping businesses and governments optimize urban services and find the best locations for facilities. This geospatial data can help fight pandemics, allowing governments and health experts to relate regional spread to other sociodemographic attributes. Financial institutions and insurance companies can improve their risk assessment. For example, home insurance prices can be improved through the mapping of climate features. The list of geo data use cases is long already but likely to get longer the more we continue tracking locations.

Yet, all of these devices are used by people. Thus, that data more often than not represents highly sensitive personal data—i.e., private information that is to be protected. It's not the movements of the phones but the movements of the people using the phones that are being tracked. That's where modern-day privacy regulations come into play and impose restrictions for what kind of data may be utilized and how such data may be utilized. In addition, these regulations are accompanied by significant fines to ensure that the rules are being adhered to.

These privacy regulations indisputably state that merely masking direct identifiers (like names or e-mail addresses) does NOT render your data assets anonymous if the remaining attributes still allow for re-identification. For geo data, which yields a characteristic digital fingerprint for each and every one of us, the process of re-identification can be as simple as a mere database lookup. De Montjoye et al. demonstrated in their seminal 2013 Scientific Reports article that two coarse spatio-temporal data points are all it takes to re-identify over half of the population. More importantly, the authors demonstrate that further coarsening the data provides little to no help if multiple locations are captured per individual, a finding that exposes a fundamental limitation of legacy data anonymization techniques.
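To make the "database lookup" tangible, here is a minimal sketch of how the uniqueness of location traces can be estimated. The function name `unicity`, the toy traces, and the representation of a data point as a `(cell, hour)` tuple are assumptions made for illustration; this is not the procedure used in the cited study.

```python
import random

def unicity(traces, p, trials=200, seed=0):
    """Estimate the share of users pinned down by p of their own points.

    traces: dict mapping a user id to a set of (cell, hour) tuples.
    """
    rng = random.Random(seed)
    users = [u for u, pts in traces.items() if len(pts) >= p]
    unique = 0
    for _ in range(trials):
        target = rng.choice(users)
        known = set(rng.sample(sorted(traces[target]), p))
        # The "database lookup": which users contain all known points?
        matches = [u for u, pts in traces.items() if known <= pts]
        unique += matches == [target]
    return unique / trials

# Toy traces (made up): three users who share some coarse locations.
traces = {
    "a": {("c1", 8), ("c2", 9), ("c3", 18)},
    "b": {("c1", 8), ("c2", 9), ("c4", 18)},
    "c": {("c1", 8), ("c5", 9), ("c6", 18)},
}
share_with_three_points = unicity(traces, p=3)
share_with_one_point = unicity(traces, p=1)
```

In this toy example every user becomes unique once all three points are known, while a single point is often shared with others. On real data, the same loop would be run over millions of traces.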

For that reason, many of the public data-sharing initiatives, which started out with the best of intentions to foster data-driven innovation, had to stop their activities related to geo data. See the following note regarding Austin's shared mobility services, which ceased their granular-level data sharing in 2019, when the privacy implications were brought to their attention:

**Note About Location Data and Privacy (Apr 12, 2019)**

After discussion with colleagues and industry experts, we have decided to remove the latitude and longitude data from our public shared micromobility trips dataset in order to protect user privacy. [...] There is no consensus from the community on how best to share this kind of location data [...]

So, Austin, and other smart cities alike, look no further—we've developed the right solution for you.

Geo support within MOSTLY AI 1.5

At MOSTLY AI, we've been dedicated to solving the long-standing challenge of anonymization with AI-based synthetic data ever since our foundation. Geo data, despite or because of its high demands, has been a focal part of our research activities, in particular because we increasingly encountered this data type in nearly every enterprise data landscape across a broad range of industries.

Thus, fast forward to 2021, we are filled with joy and pride to finally announce that our industry-leading synthetic data platform now ships with direct geo data support. So, aside from categorical, numeric, temporal, and textual data attributes, users can now also explicitly declare an arbitrary number of attributes to contain geo coordinates. The synthesized dataset will then faithfully represent the original data asset, with the statistical relationships between the geo and non-geo attributes all being retained.

Internally, our patent-pending technique provides an efficient representation of geo information that adaptively scales its granularity to the provided dataset. This allows the generated synthetic data to represent regional just as well as local characteristics, all happening in a fully automated fashion.

Case study for synthetic geo positions

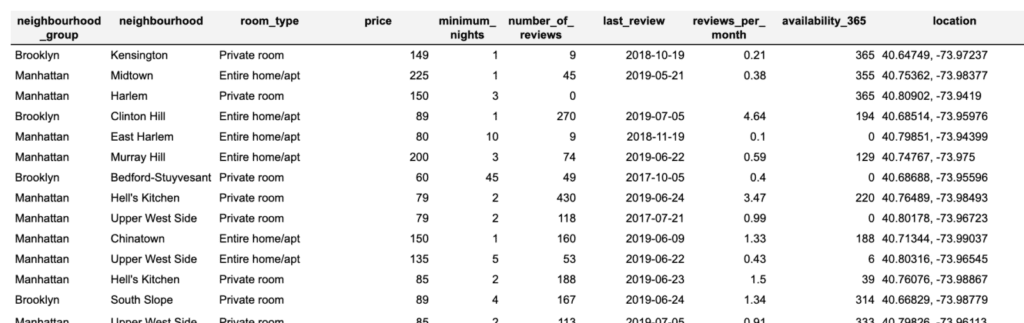

For the purpose of demonstration, let's start out with a basic example on top of 2019 Airbnb listings for New York City. That dataset consists of close to 50,000 records with 10 measures each, one of which represents the listing location encoded as latitude/longitude coordinates. While this dataset is rather small compared to typical customer datasets, it still provides a good first understanding of the newly added geo support.

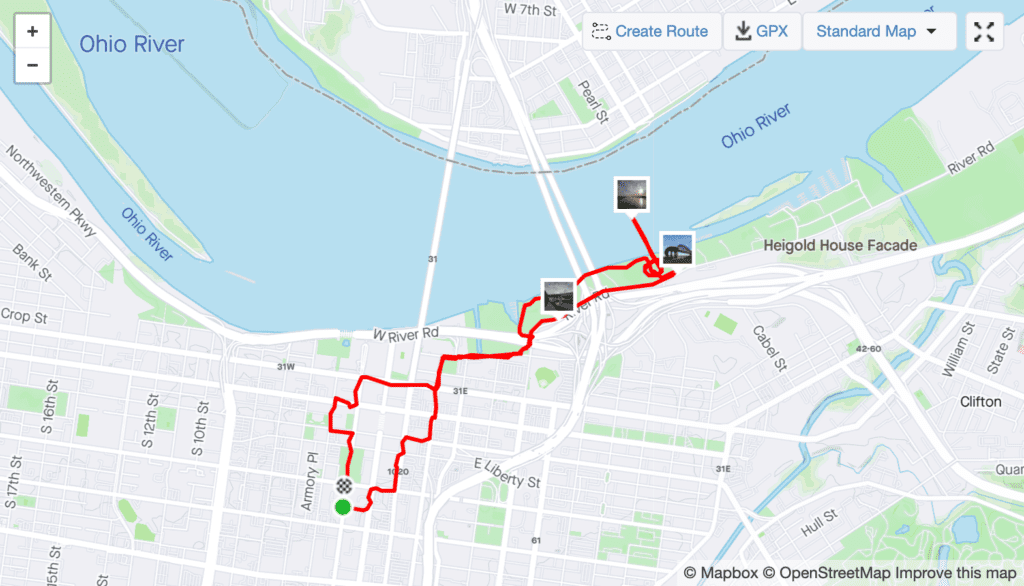

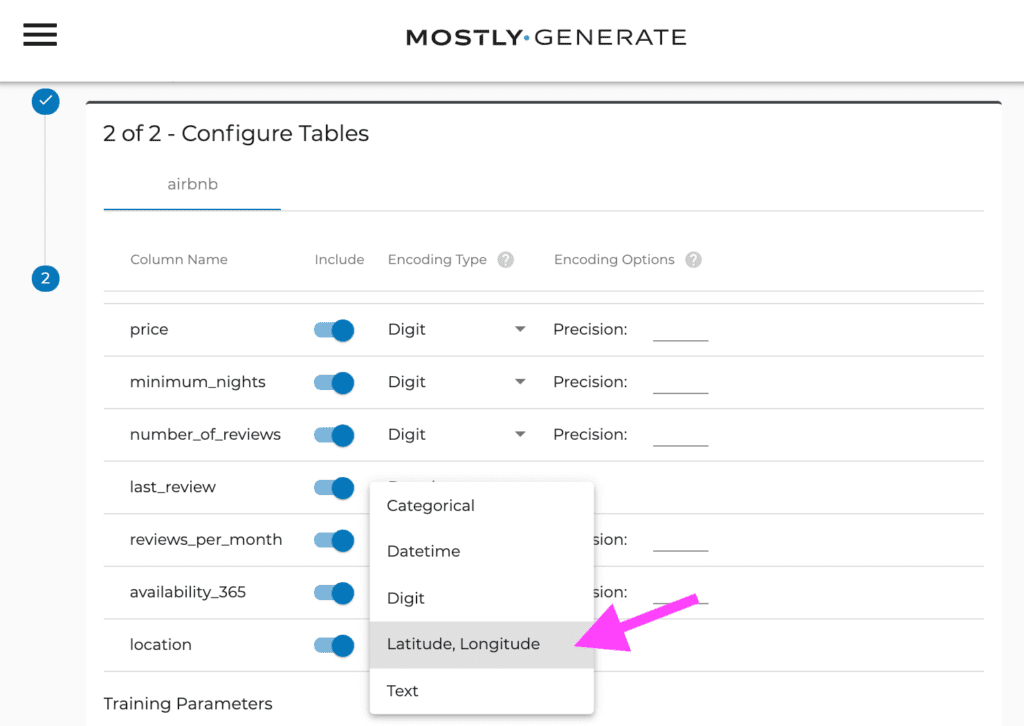

Synthesizing a geo-enriched dataset is as simple as synthesizing any other dataset thanks to MOSTLY AI's easy-to-use user interface. One simply needs to provide the dataset (in this case, we uploaded it as a CSV file) and then inform the system about the geo-encoded attribute. All that is left to do is trigger the synthesis process, which then executes the encoding, the training, and the generation stages. Once the job is completed, users of the platform can download the synthesized dataset, as well as a corresponding quality assurance report.

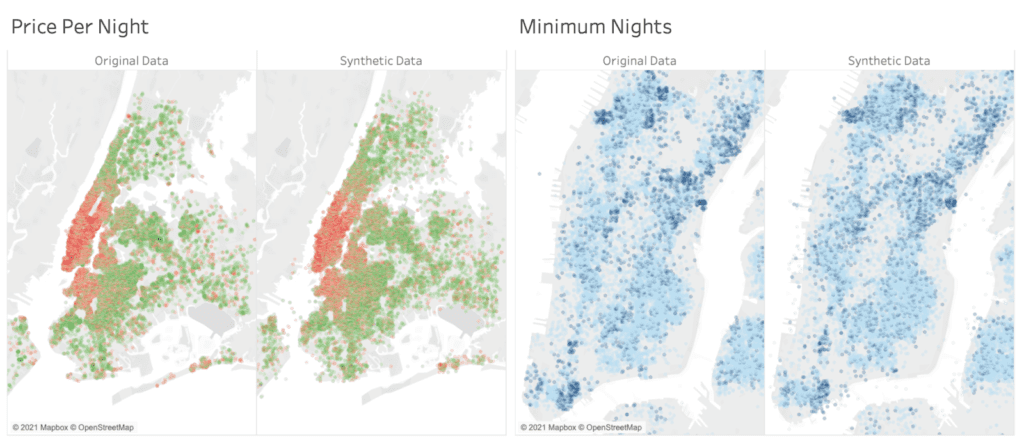

A quick check shows that the basic statistics are well retained. For example, the average price per night is ~$212 for an entire home, compared to ~$70 for a shared room. The average minimum stay is ~8.5 nights in Manhattan vs. 5 nights in Queens. All these are perfectly reflected within the synthetic data. With the focus of this article being on the geo properties, we continue our analysis leveraging Tableau, a popular data visualization solution. Like any other analytical tool, it can process synthetic data in exactly the same way as the original data. However, any analysis on the synthetic data will be private by design, even though it operates on granular-level data.
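A quick check of this kind can be sketched in a few lines. The column names `room_type` and `price` and the toy rows below are assumptions for illustration and not the actual listings; with a real CSV export, a `pandas` group-by would do the same job.

```python
from collections import defaultdict
from statistics import mean

def group_means(rows, key, value):
    """Average `value` per distinct `key` over a list of dict records."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row[value])
    return {k: mean(v) for k, v in groups.items()}

def max_relative_gap(original, synthetic, key, value):
    """Largest relative deviation of a synthetic group mean from the original."""
    orig = group_means(original, key, value)
    syn = group_means(synthetic, key, value)
    return max(abs(syn[k] - orig[k]) / abs(orig[k]) for k in orig if k in syn)

# Toy records (made up) standing in for the original and synthetic tables.
original = [
    {"room_type": "Entire home/apt", "price": 200},
    {"room_type": "Entire home/apt", "price": 220},
    {"room_type": "Shared room", "price": 70},
]
synthetic = [
    {"room_type": "Entire home/apt", "price": 210},
    {"room_type": "Shared room", "price": 77},
]
gap = max_relative_gap(original, synthetic, "room_type", "price")
```

A small `gap` indicates that the per-group averages of the synthetic table track those of the original one. Which gap counts as acceptable depends on the use case.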

Figure 4 provides a side-by-side comparison of the overall geo distribution of listings, color-coded either by listing price (red values represent high prices) or by required minimum stay (dark blue represents longer stays). As can be seen, the distinct relationship between location and price is retained just as well as the relationship between location and minimum nights of stay. One can publicly share the synthetic geo data to allow for similar insights as with the original data, but without running the risk of exposing an individual's privacy.

Case study for synthetic geo traces

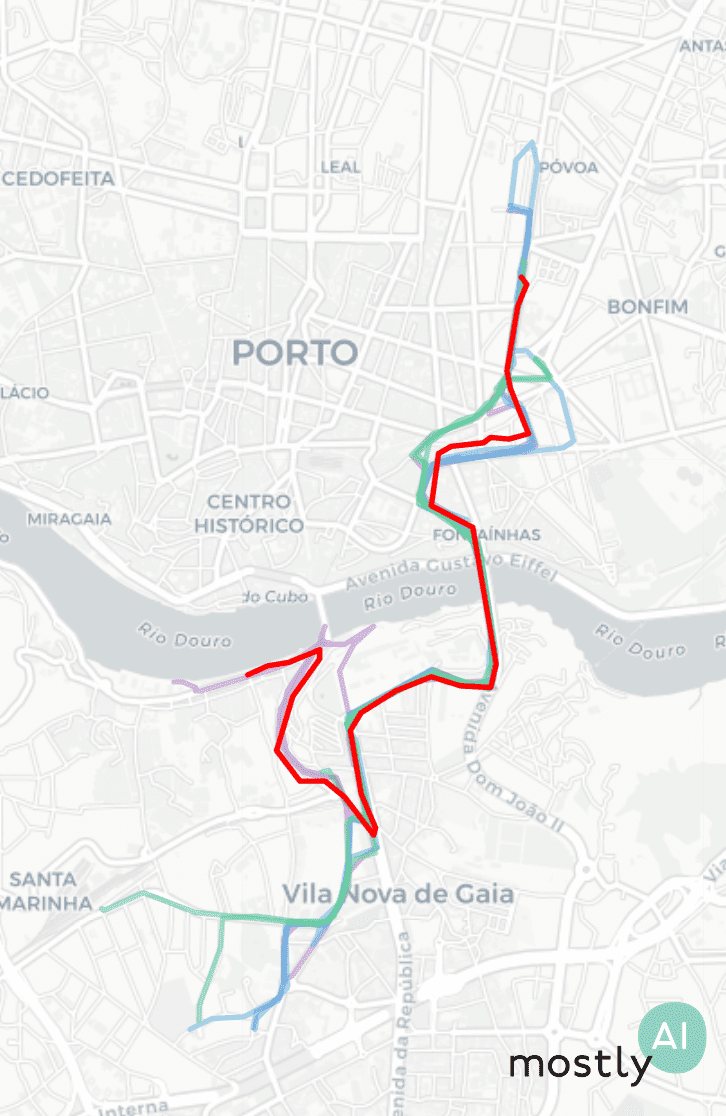

As a second demonstration, we will turn to the Porto Taxi dataset. It consists of over a million taxi trips, together with their detailed geo location captured at 15-second intervals. Thus, depending on the overall duration of the trip, we see a varying sequence length of recorded geo locations. The total amount of available data provides plenty of opportunities for the generative model to learn and retain detailed level characteristics of the dataset, while the general ease of use remains unchanged.

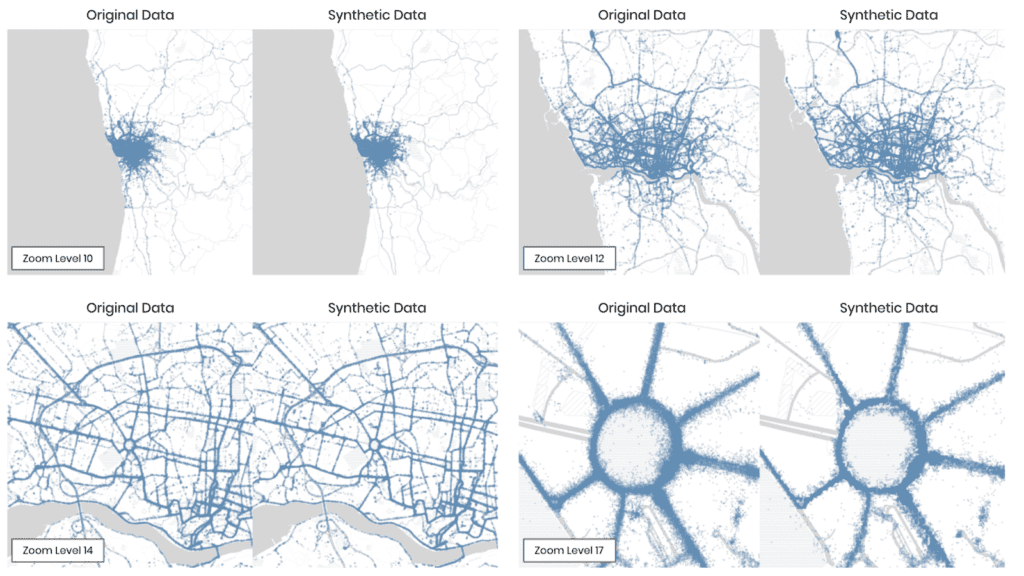

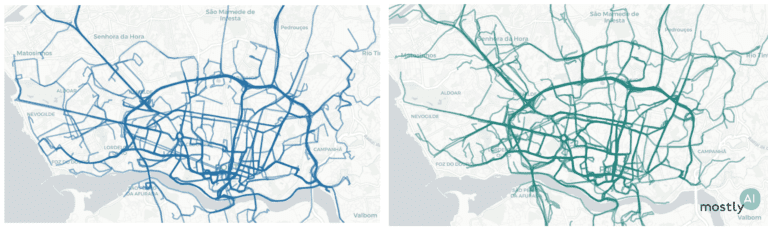

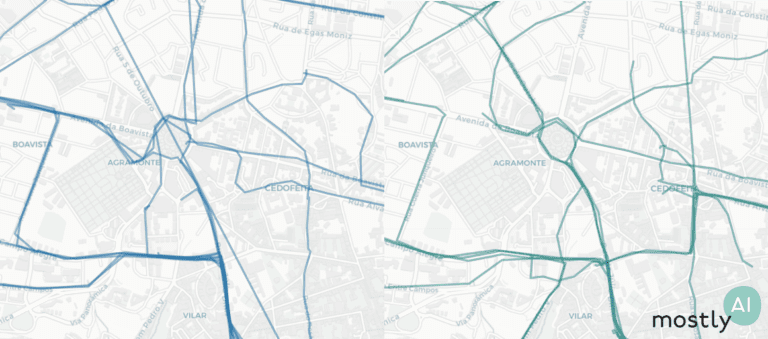

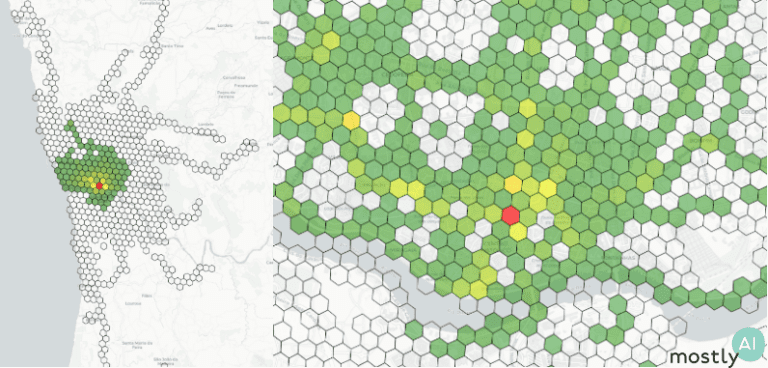

Figure 5 visualizes the results side by side, i.e., both the recorded original and the generated synthetic taxi locations, showcasing the great out-of-the-box detail and adaptive resolution of MOSTLY AI's synthetic data platform. As one can see, even though each and every taxi trip has been generated from scratch, the emerging traffic patterns match at the city, district, and even block levels (see the zoomed-in roundabout in the bottom right corner of Figure 5).

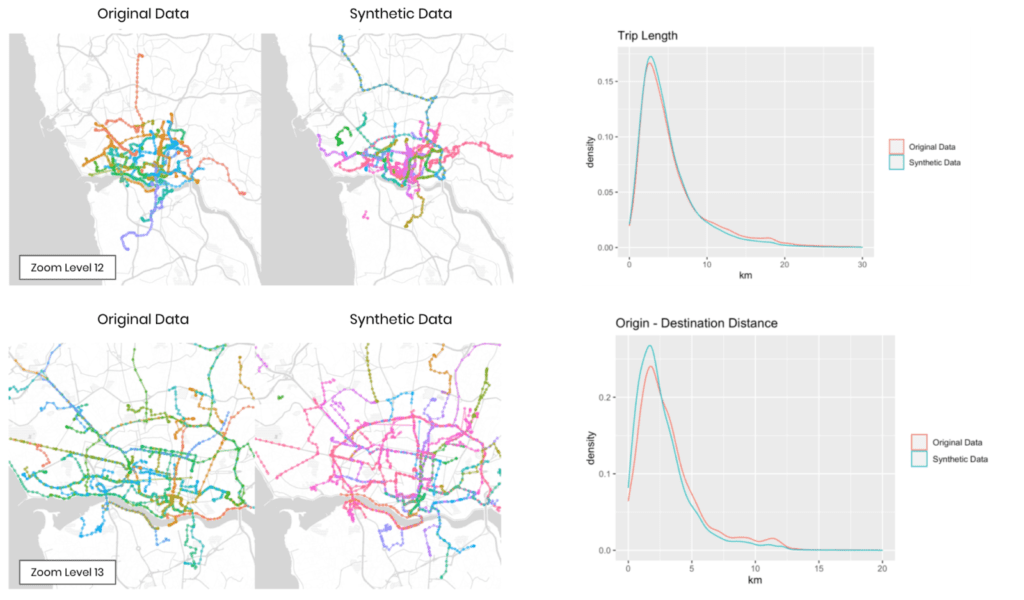

Further, Figure 6 shows randomly selected taxi trips as well as trip-level statistics. All of these plots clearly show that not only the location but also the consistency of trips is faithfully represented. Synthetic trip trajectories remain coherent and do not erratically jump from one location to another. This yields a near-perfect representation of overall trip length, as well as of the distance between the trip origin and its destination. Note that the quality of the synthetic trips can easily be improved further, as we've only trained on ~10% of the original data and refrained from any dataset-specific parameter tuning.

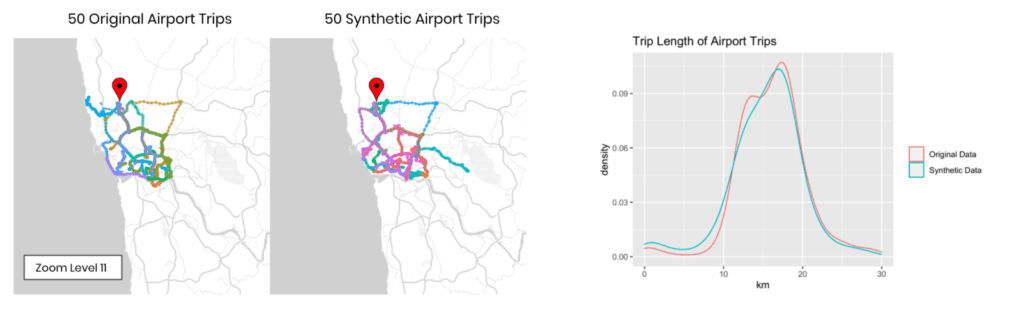

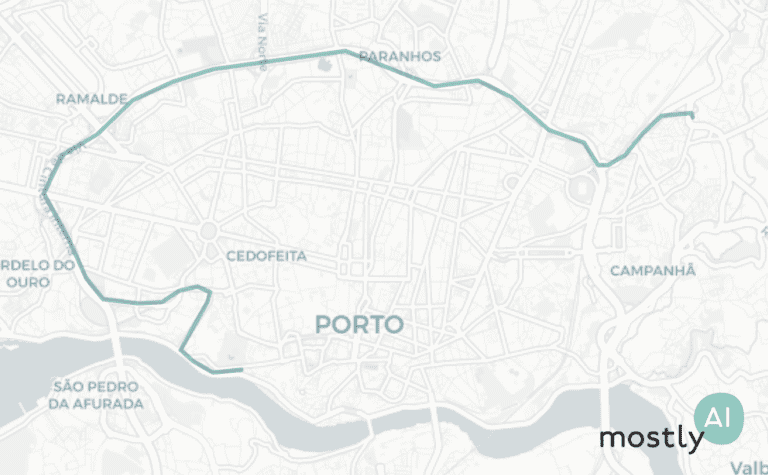

Finally, Figure 7 depicts 50 randomly selected taxi trips to the International Airport of Porto, for the original and the synthetic data each. Once more, we see both the spatial distribution and the overall increased length of airport trips well reflected within the synthetic trips.

The future of geo data sharing is bright

Precise geolocation information is considered to be one of the hardest things to anonymize. This prevents many customer data assets that contain geographic references from being easily shared and utilized across teams. But with the value of customer trust and the value of customer data being increasingly recognized, we are thrilled to deliver the presented geo support within MOSTLY AI 1.5. It will provide you with truly anonymous yet highly accurate representations of your data assets and will help you on your mission to reduce wasteful operations and build smarter, context-aware services. Thanks to AI-powered synthetic data, the future of open sharing of geo data is becoming bright again.

Acknowledgment

This research and development on synthetic mobility data is supported by a grant of the Vienna Business Agency (Wirtschaftsagentur Wien), a fund of the City of Vienna.

We had such a busy start to 2021! Our developers worked hard to deliver much anticipated new features to simplify our customers' lives with faster, safer, and easier processes. A serious legal assessment was underway, while the MOSTLY AI team also made the SOC 2 certification happen. Microsoft, Telefónica, the City of Vienna, and many others have been using our synthetic data generation platform to make the most of their data assets, with Erste Group signing a 3-year partnership last month. An important piece of research was also born, proving that synthetic data for Explainable AI will be an important use case.

The feedback we have received so far makes it abundantly clear that AI-generated synthetic data is the way to go for large organizations looking to step up their data game. And the new version of our category-leading synthetic data generator, MOSTLY AI 1.5, is the tool that provides the level of maturity, usability, and data quality that is crucial to scale synthetic data in an organization.

Legal support for synthetic data is part of the product upgrade

Privacy protection and data security have a special place in our hearts. We take this very seriously, and completing the SOC 2 certification is a very meaningful step for the team, reinforcing all that we stand for. SOC 2 assures our customers that we follow consistent security practices and that we are able to keep their valuable data always safe and protected through the implementation of standardized controls.

Another important way in which we support our customers' legal teams is by providing a Data Protection Impact Assessment (DPIA) blueprint for MOSTLY AI's synthetic data platform. This document, created in collaboration with the reputable law firm Taylor Wessing, will allow legal teams to demonstrate compliance to regulators easily.

Work faster and synthesize data easier

You can now use the Data Catalog to enable carefree automation of synthetic data pipelines and store links to data sources together with their configuration settings. Synthesis is now a one-click job.

Using the REST API, you can create fully automated synthetic data pipelines. You can easily integrate MOSTLY AI's synthetic data platform with upstream ETL applications and downstream post-processing tools.

GPU accelerated synthetic data is like synthetic data with wings. Using the brand new GPU training option, you can now synthesize your sequential datasets in considerably less time, without any impact on synthetic data quality or privacy.

MOSTLY AI 1.5 now natively supports Parquet files, enabling faster time-to-data, as converting to CSV is no longer necessary. From now on, you can save your encoding configurations as a JSON file and use your own tooling to generate configuration settings for datasets with a large number of columns.

Now there is also a turbo button for synthetic data generation: you can now choose to optimize model training for speed. It's really fast and the resulting synthetic data is only a little less accurate. Great for use cases where speed is of utmost importance, but accuracy isn’t paramount, like creating realistic data for testing.

Stay safe with added synthetic data controls

MOSTLY AI’s new User Management system allows you to securely control user access to data, run job details, and synthetic data generation features. Onboarding and offboarding employees is now a breeze. Users can log in using their Active Directory credentials.

You can now use stochastic rare category protection thresholds for categorical variables, which randomize the decision of whether to include or exclude categories whose frequency in the data is very close to the inclusion threshold. This makes it impossible to infer even the parameters of the rare category protection, adding a further layer of protection for outliers and extreme values.
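The idea behind a stochastic threshold can be illustrated with a minimal sketch. The function name, the parameters `threshold` and `jitter`, and the `_RARE_` placeholder are assumptions for illustration only; they do not describe how MOSTLY AI 1.5 implements the feature.

```python
import random
from collections import Counter

def protect_rare_categories(values, threshold=5, jitter=2, seed=None,
                            placeholder="_RARE_"):
    """Replace rare categories, using a randomly shifted cut-off per category."""
    rng = random.Random(seed)
    counts = Counter(values)
    keep = set()
    for category, count in counts.items():
        # Hypothetical rule: each category faces its own randomized threshold,
        # so categories near the cut-off are kept or dropped by chance.
        effective = threshold + rng.randint(-jitter, jitter)
        if count >= effective:
            keep.add(category)
    return [v if v in keep else placeholder for v in values]

values = ["a"] * 20 + ["b"] * 5 + ["c"]
protected = protect_rare_categories(values, seed=42)
```

With these settings the frequent category `a` is always kept and the singleton `c` is always replaced, while the fate of `b`, which sits right at the threshold, depends on the random draw. An observer of the output therefore cannot read off the exact threshold.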

The consistency correction feature helps generate consistent historical sequences for your synthetic subjects when there is a large variety of values. Users can enable consistency correction per categorical column in their event table, and Admins can configure in the Global run settings whether Users can work with this feature.

A new encoding type: synthetic geolocation data

Due to popular demand, we are now supporting the synthesis of geolocation data with latitude and longitude encoding types. It's time to get those footprint datasets ready to work for you in a privacy-preserving way!

We would love to hear your feedback! If you are using MOSTLY AI 1.5, please let us know what you think, as we continuously strive to build an even better product for you. If you are not yet our customer but are curious to find out how our synthetic data platform can increase the ROI of your data projects, contact us for a personalized demo!

And is it really possible to securely anonymize the location data that is currently being shared to combat the spread of COVID-19?

To answer these and more questions, SOSA’s Global Cyber Center (GCC) invited our CEO Michael Platzer to join them on their Cyber Insights podcast for an interview. For those of you who don’t know SOSA: it’s a leading global innovation platform that helps corporates and governments alike to build and scale their open innovation efforts. What follows is a transcript of the podcast episode.

William: Wonderful, now Michael, when you think about the broad array of cybersecurity trends that are unfolding today – ranging from new threats to new regulations – what is really top of mind for you in 2020?

Michael: Thanks for having me! We are MOSTLY AI and we are a deep-tech startup founded here in Europe while preparing for GDPR. Very early on, we had this realization that synthetic data will offer a fundamentally new approach to data anonymization. The idea is quite simple. Rather than aggregating, masking or obfuscating existing data, you would allow the machine to generate new data or fake data. But we rather prefer to say “AI-generated synthetic data”. And the benefit is, that you can retain all the statistical information of the original data, but you break the 1:1 relationship to the original individuals. So you cannot re-identify anymore – and thus it’s not personal data anymore, it’s not subject to privacy regulations anymore. So you are really free to innovate and to collaborate on this data – but without putting your customers’ privacy at risk. It’s really a fundamental game-changer that requires quite a heavy lifting on the AI-engineering side. But we are proud to have an excellent team here and to really see that the need for our product is growing fast.

William: Very interesting! Now, we know that location data is among our most accessible PII – we kind of give it out all the time via our mobile device. In the wake of the coronavirus, we are seeing calls to use our location data to track the spread of this pandemic. Is it possible to really effectively anonymize and secure our location data? Or can this data just be reverse engineered? Could using synthetic data help?

Michael: Yes definitely, and we are also engaging with decision-makers at this moment in this crisis. Location data is incredibly difficult to anonymize. There have been enough studies that show how easy it is to re-identify location traces. So what organizations end up with is only sharing highly aggregated count statistics. For example, how many people are at which time at which location. But you lose the dimension at the individual level. And this is so important if you want to figure out what type of socio-demographic segments are adapting to these new social distancing measures, and for how long they do that. And is it 100% of the population that’s adapting, are social contacts reducing by 60% or is it maybe a tiny fragment of segments that is still spreading the virus? To get to this kind of level of intelligence you need to work at a granular level. So not on an aggregated level, but on a granular level. Synthetic data allows you to retain the information on a granular level but break the tie to us individually. We just, coincidentally, in February wrote a blogpost on synthetic location traces – so before the corona crisis started – because we were researching this for the last year. It’s on our company blog and I can only invite people to read it. Super exciting new opportunities now to anonymize location traces!

William: That is exciting – and it sounds as if it could be very helpful, especially given what we are all going through! Now, Michael, there is an expanding list of techniques to protect data today; from encryption schemes, tokenization, anonymization, etc. Should CISOs look at the landscape as a “grocery shelf” with ingredients to be selected and combined or should they search for one technique to rule them all?

Michael: Well, I don’t believe that there is a one-size-fits-all solution out there. And those different solutions really serve different purposes. It’s important to understand that encryption allows you to safely share data with people that you trust – or you think that you trust. Whether that’s people or machines, at the end, there is someone sitting who is decrypting the data and then has access to the full data. And you hope that you can trust the person. Now, synthetic data allows you to share data with people where you don’t necessarily need to rely on trust, because you have controlled for the risk of a privacy leak. It’s still super valuable, highly relevant information. It contains your business secrets, it contains all the structure and correlations that are available to run your analytics, to train your machine learning algorithms. But you have zeroed out your privacy risk! In that sense, synthetic data and encryption serve two different purposes. So every CISO needs to see what their particular challenge and problem is that needs to be overcome.

William: Well Michael, we’re coming up on our time here. Are there any concluding remarks or anything you would like to add before we hang up?

Michael: Well, we just closed our financing round so we’re set for further growth both in Europe as well as the US. We’re excited about the growing demand for data anonymization solutions, also for our solution. Happy to collaborate with innovative companies, who take privacy seriously. And of course, I wish everyone best of health and that we get – also as a global community – just stronger out of the current crisis.

It’s 2020, and I’m reading a 10-year-old report by the Electronic Frontier Foundation about location privacy that is more relevant than ever. Seeing how prevalent the bulk collection of location data would become, the authors discussed the possible threats to our privacy as well as solutions that would limit this unrestricted collection while still allowing us to reap the benefits of GPS-enabled devices.

Some may have the opinion that companies and governments should stop collecting sensitive and personal information altogether. But that is unlikely to happen and a lot of data is already in the system, changing hands, getting processed, and analyzed over and over again. This can be quite unnerving but most of us do enjoy the benefits of this information sharing like receiving tips on short-cuts through the city during the morning rush hour. So how can we protect the individual’s rights and make responsible use of location data?

Until very recently, the main approach was to anonymize these data sets: hide rare features, add noise, aggregate exact locations into rough regions or publish only summary statistics (for a great technical but still accessible overview, I can recommend this survey). Cryptography also offers tools to keep most of the sensitive information on-device and only transmit codes that compress use-case relevant information. However, these techniques make a big trade-off between privacy, accuracy, and utility of the modified data. Even after this preprocessing, if the original data retains any of its utility then the risk of successfully re-identifying an individual is extremely high.

D.N.A. is probably the only thing that’s harder to anonymize than precise geolocation information.

Time and again, we see how subpar anonymization can lead to high-profile privacy leaks and this is not surprising, especially for mobility data: in a paper published in Scientific Reports, researchers showed that 95% of the population can be uniquely identified just from four time-location points in a data set with hourly records and spatial resolution given by the carrier’s antenna. In 2014, the publication of supposedly safe pseudonymized taxi trips allowed data scientists to find where celebrities like Bradley Cooper or Jessica Alba were heading by querying the data based on publicly available photos. Last year, a series of articles in the New York Times highlighted this issue again: from a data set with anonymized user IDs, the journalists captured the homes and movements of US government officials and easily re-identified and tracked even the president.

Mostly AI’s solution for privacy-preserving data publishing is to go synthetic. We develop AI-based methods for modeling complex data sets and then generate statistically representative artificial records. The synthetic data contains only made-up individuals and can be used for test and development, analysis, AI training, or any downstream tasks really. Below, I will explain this process and showcase our work on a real-world mobility data set.

The Difficulties With Mobility Data

What is a mobility data set in the first place? In its simplest form, we might be talking about single locations only where a record is a pair of latitude and longitude coordinates. In most situations though, we have trips or trajectories which are sequences of locations.

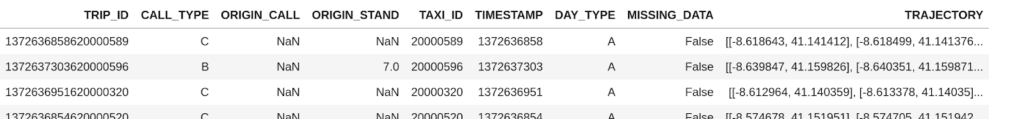

The trips are often tagged by a user ID and the records can include timestamps, device information, and various use-case specific attributes.

The aim of our new Mobility Engine is to capture and reproduce the intricate relationship between these attributes. But there are numerous issues that make mobility data hard to model.

- Sparsity: a given latitude/longitude pair appears only once or a few times in the data set, especially in high-granularity records.

- Noise: GPS recordings can include a fair amount of noise so even people traveling the exact same route can have quite different trajectories recorded.

- Different scales: the same data set could include pedestrians walking in a park and people taking cabs from the airport to the city so the change in data points can vary highly.

- Sampling rate: making modeling even more difficult, even short trips can contain hundreds of recordings and long trips might sample very infrequently.

- Size of the data set: the most useful data sets are often the largest. Any viable modeling solution should handle millions of trips with a reasonable turnaround time.

Our solution can overcome all these difficulties without the need to compromise on accuracy, privacy or utility. Let me demonstrate.

Generating Synthetic Trajectories

The Porto Taxi data set is a public collection of a year’s worth of trips by 442 cabs running in the city of Porto, Portugal. The records are trips, sequences of latitude/longitude coordinates recorded at 15-second intervals, with some additional metadata (such as driver ID or time of day) that we won’t consider now. There are short and long trips alike and some of the trajectories are missing a few locations so there could be rather big jumps in them.

Given this data, we had our Mobility Engine sift through the trajectories multiple times and learn the parameters of a statistical process that could have generated such a data set. Essentially, our engine is learning to answer questions like

- “What portion of the trips start at the airport?” or

- “If a trip started at point A in the city and turned left at intersection B, what is the chance that the next location is recorded at C?”

You can imagine that if you are able to answer a few million of these questions then you have a good idea about what the traffic patterns look like. At the same time, you would not learn much about a single real individual’s mobility behavior. Similarly, the chance that our engine is reproducing exact trips that occur in the real data set, which in turn could hurt one’s privacy, is astronomically small.
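To give a feel for what "learning to answer such questions" means, here is a deliberately simplified sketch: a first-order transition model over named locations that is estimated from trips and then sampled to create new ones. It is a toy stand-in and not the Mobility Engine; the location names, `fit_transitions`, and `sample_trip` are made up for illustration, and a model this simple offers no privacy guarantees by itself.

```python
import random
from collections import Counter, defaultdict

START, END = "<start>", "<end>"

def fit_transitions(trips):
    """Estimate P(next location | current location) from observed trips."""
    counts = defaultdict(Counter)
    for trip in trips:
        path = [START, *trip, END]
        for here, nxt in zip(path, path[1:]):
            counts[here][nxt] += 1
    return {
        here: {nxt: n / sum(c.values()) for nxt, n in c.items()}
        for here, c in counts.items()
    }

def sample_trip(model, rng, max_len=1000):
    """Generate a new trip by walking the learned transition probabilities."""
    trip, here = [], START
    while len(trip) < max_len:
        options = model[here]
        here = rng.choices(list(options), weights=list(options.values()))[0]
        if here == END:
            break
        trip.append(here)
    return trip

trips = [
    ["airport", "A", "B", "C"],
    ["airport", "A", "B", "D"],
    ["center", "A", "B", "C"],
    ["center", "B", "C"],
]
model = fit_transitions(trips)
share_starting_at_airport = model[START]["airport"]  # first question above
chance_of_c_after_b = model["B"]["C"]                # simplified second question
new_trip = sample_trip(model, random.Random(0))
```

Note that the second question in the list conditions on more than the current location (where the trip started and which turn it took), which a first-order model like this one cannot express. Capturing such longer-range dependencies is exactly what makes realistic trajectory modeling hard.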

For the case of this post, we trained on 1.5 million real trajectories and then had the model generate synthetic trips. We produced 250,000 artificial trips for the following analysis, but with the same trained model, we could have as easily built 250 million trips.

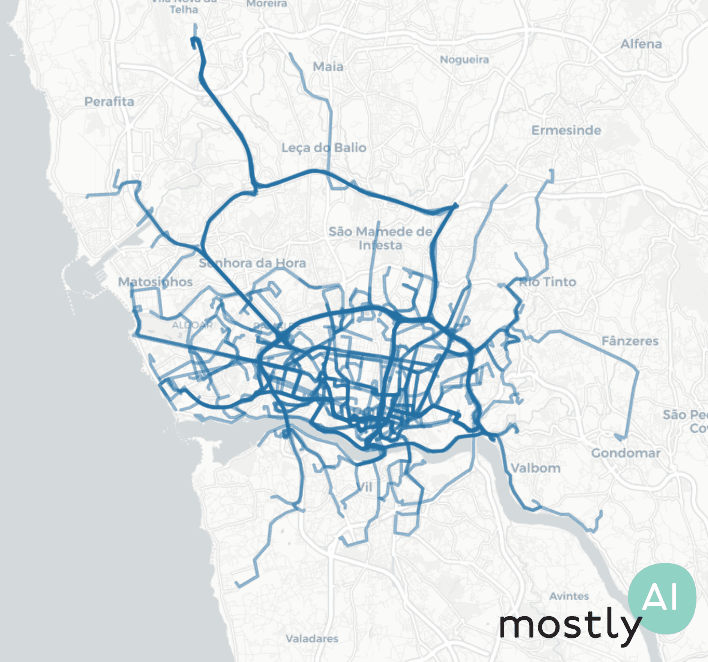

First, for a quick visual, we plotted 200 real trips recorded by real taxi drivers (on the left, in blue) and 200 of the artificial trajectories that our model generated (on the right, in green). As you can see, the overall picture is rather convincing with the high-level patterns nicely preserved.

Looking more closely, we see very similar noise in the real and synthetic trips on the street level.

In general, we keep three main things in mind when evaluating synthetic data sets.

- Accuracy: How closely does the synthetic data follow the distribution of the real data set?

- Utility: Can we get competitive results using the synthetic data in downstream tasks?

- Privacy: Is it possible that we are disclosing some information about real individuals in our synthetic data?

As for accuracy, we compare the real and synthetic data across several location- and trip-level metrics. First, we require the model to accurately reproduce the location densities, the ratio of recordings at a given spatial area, and hot-spots at different granularity. There are plenty of open-source spatial analysis libraries that can help you work with location data such as skmob, geopandas, or Uber’s H3, which we used to generate the hexagonal plots below. The green-yellow-red transition marks how the city center is visited more frequently than the outskirts with a clear hot-spot in the red region.
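A density comparison of this kind can be sketched without any geo library. The example below bins recordings into a plain square grid instead of H3 hexagons and compares the two density maps with the total variation distance; the grid size `cell_deg`, both function names, and the toy points are assumptions for illustration, not the evaluation used for the plots.

```python
import math
from collections import Counter

def cell_density(points, cell_deg=0.01):
    """Share of recordings that fall into each square grid cell."""
    counts = Counter(
        (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
        for lat, lon in points
    )
    total = sum(counts.values())
    return {cell: n / total for cell, n in counts.items()}

def total_variation(p, q):
    """0 means identical density maps, 1 means completely disjoint ones."""
    cells = set(p) | set(q)
    return 0.5 * sum(abs(p.get(c, 0.0) - q.get(c, 0.0)) for c in cells)

# Toy recordings (made up) around Porto: two neighboring grid cells.
real_points = [(41.155, -8.615)] * 3 + [(41.165, -8.615)]
synthetic_points = [(41.155, -8.615)] * 2 + [(41.165, -8.615)] * 2
gap = total_variation(cell_density(real_points), cell_density(synthetic_points))
```

Repeating the comparison for several values of `cell_deg` mirrors the idea of checking hot-spots at different granularity.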

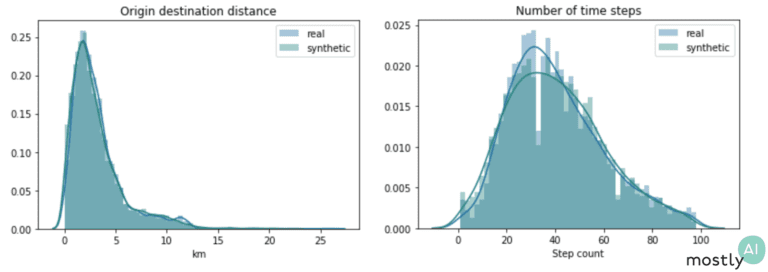

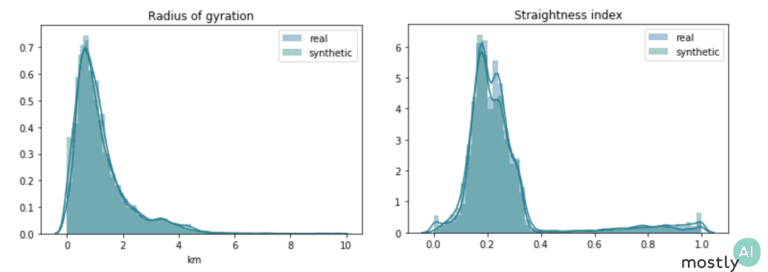

From the sequences of latitude and longitude coordinates, we derive various features such as trip duration and distance traveled, origin-destination distance, and a number of jump length statistics.

The plots above show two of these distributions both for the real and synthetic trips. The fact that these distributions overlap almost perfectly shows that our engine is spot-on at reproducing the hidden relationships in the data. To capture a different aspect of behavior, we also consider geometric properties of paths: the radius of gyration measures how far on average the trip is from its center of mass, and the straightness index is the ratio of the origin-destination distance to the full traveled distance. So, for a straight line, the index is exactly 1, and for more curvy trips it takes lower values, with a round trip corresponding to a straightness index of 0. We again see that the synthetic data follows the exact same trends as the real one, even mimicking the slight increase in the straightness distribution from 0.4 to 1. I should stress that we get this impressive performance without ever optimizing the model for these particular metrics and so we would expect similarly high accuracy for other so far untested features.
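The trip-level features discussed above can be derived from raw coordinates as in the following minimal sketch. It assumes a trip is a list of `(lat, lon)` points recorded every 15 seconds, uses the haversine formula for distances, approximates the center of mass by the mean latitude and longitude (reasonable at city scale), and computes the radius of gyration as the root mean square distance to that center. The function and key names are illustrative.

```python
import math

EARTH_RADIUS_KM = 6371.0

def haversine_km(a, b):
    """Great-circle distance between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))

def trip_features(trip, interval_s=15):
    """Summarize one trip given as a list of (lat, lon) points."""
    jumps = [haversine_km(p, q) for p, q in zip(trip, trip[1:])]
    traveled = sum(jumps)
    od = haversine_km(trip[0], trip[-1])
    center = (sum(p[0] for p in trip) / len(trip),
              sum(p[1] for p in trip) / len(trip))
    gyration = math.sqrt(
        sum(haversine_km(p, center) ** 2 for p in trip) / len(trip)
    )
    return {
        "duration_s": (len(trip) - 1) * interval_s,
        "distance_km": traveled,
        "od_distance_km": od,
        "max_jump_km": max(jumps, default=0.0),
        "radius_of_gyration_km": gyration,
        # 1 for a straight line, 0 for a round trip, undefined without movement
        "straightness": od / traveled if traveled > 0 else float("nan"),
    }

straight = trip_features([(41.0, -8.6), (41.1, -8.6), (41.2, -8.6)])
round_trip = trip_features([(41.0, -8.6), (41.1, -8.6), (41.0, -8.6)])
```

Computing these dictionaries for every real and every synthetic trip yields the per-feature distributions that are then compared. Note that the duration estimate is too low for trips with missing recordings.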

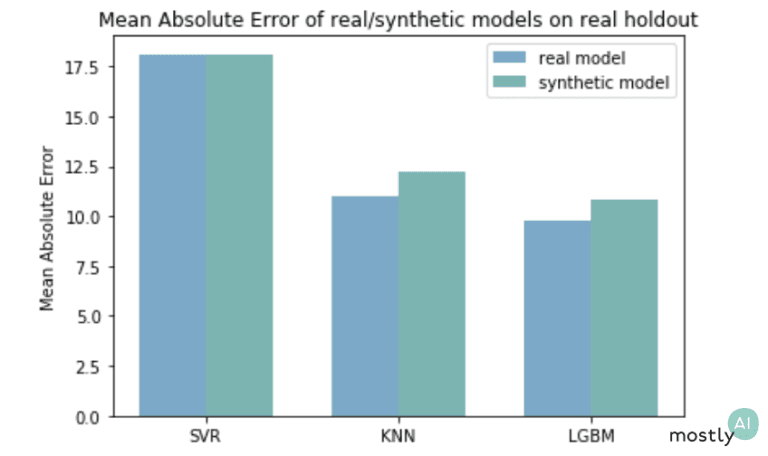

Regarding utility, one way we can test-drive our synthetic mobility data is to use it in practical machine learning scenarios. Here, we trained three models to predict the duration of a trip based on the origin and destination: a Support Vector Machine, a K-nearest neighbor regressor, and an LGBM regressor.

We trained the synthetic models only on synthetic data and the real models on the original trajectories. The scores in the plot came from testing all the models against a holdout from the original, real-life data set. As expected, the synthetically trained models performed slightly worse than the ones that have seen real data but still achieved a highly competitive performance.
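This evaluation protocol (train on synthetic, test on real) can be sketched with a tiny nearest-neighbor regressor written in plain Python. The data layout, where each row is `((origin_lat, origin_lon, dest_lat, dest_lon), duration)`, the toy numbers, and the function names are assumptions for illustration; the models in the plot were trained with standard machine learning libraries on the full data.

```python
import math

def knn_predict(train, query, k=1):
    """Average the durations of the k training rows closest to `query`."""
    nearest = sorted(train, key=lambda row: math.dist(row[0], query))[:k]
    return sum(duration for _, duration in nearest) / len(nearest)

def mean_absolute_error(train, holdout, k=1):
    """Score a model fitted on `train` against real holdout rows."""
    errors = [abs(knn_predict(train, x, k) - y) for x, y in holdout]
    return sum(errors) / len(errors)

# Toy rows (made up): ((origin_lat, origin_lon, dest_lat, dest_lon), seconds)
real_train = [
    ((0.0, 0.0, 0.0, 1.0), 100.0),
    ((0.0, 0.0, 0.0, 2.0), 200.0),
    ((0.0, 0.0, 0.0, 3.0), 300.0),
]
synthetic_train = [
    ((0.0, 0.0, 0.0, 0.9), 95.0),
    ((0.0, 0.0, 0.0, 2.2), 215.0),
    ((0.0, 0.0, 0.0, 3.2), 310.0),
]
real_holdout = [
    ((0.0, 0.0, 0.0, 1.2), 120.0),
    ((0.0, 0.0, 0.0, 2.8), 280.0),
]

# Both models are scored on the same real holdout, never on synthetic data.
mae_real = mean_absolute_error(real_train, real_holdout)
mae_synthetic = mean_absolute_error(synthetic_train, real_holdout)
```

The key design choice is that the holdout always consists of real trips that neither model has seen, so the two error values are directly comparable.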

Moreover, imagine you are an industry-leading company and shared the safe synthetic mobility data in a competition or hackathon to solve this prediction task. If the teams came up with the above-shown solutions and got the green error rates on the public, synthetic data, then you can rightly infer that the winning solution would also do best on your real but sensitive data set that the teams have never seen.

The privacy evaluation of the generated data is always a critical and complicated issue. Already at the training phase, we have controls in place to stop the learning process and thus prevent overfitting. This ensures that the model is learning the general patterns in the data rather than memorizing exact trips or overly specific attributes of the training set. Second, we also compare how far our synthetic trips fall from the closest real trips in the training data. In the plot below, we have a single synthetic trip in red, with the purple trips being the three closest real trajectories, then the blue and green paths sampled from the 10-20th and 50-100th closest real trips.

To quantify privacy, we actually look at the distribution of closest distances between real and synthetic trips. In order to have a baseline distribution, we repeat this calculation using a holdout of the original but so far unused trips instead of the synthetic ones. If these real-vs-synthetic and real-vs-holdout distributions differ heavily, in particular, if the synthetic data falls closer to the real samples than what we would expect based on the holdout, that could indicate an issue with the modeling. For example, if we simply add noise to real trajectories then the latter distributions will clearly flag this data set for a privacy leak. However, the data generated by our Mobility Engine passed these tests with flying colors.
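The distance-based check described above can be sketched as follows. The trip distance used here (mean point-wise gap between trips of equal length), the comparison of medians, and the `tolerance` factor are simplifying assumptions for illustration; the text does not specify which distance or test statistic is used in practice.

```python
import math
from statistics import median

def trip_distance(a, b):
    """Crude distance: mean point-wise gap between two equal-length trips."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)

def closest_distances(candidates, reference, distance=trip_distance):
    """For each candidate trip, the distance to its nearest reference trip."""
    return [min(distance(c, r) for r in reference) for c in candidates]

def too_close(train, synthetic, holdout, tolerance=0.5):
    """Flag synthetic data that sits much closer to train than a holdout does."""
    syn = median(closest_distances(synthetic, train))
    baseline = median(closest_distances(holdout, train))
    return syn < tolerance * baseline

# Toy trips (made up), each with two points in an abstract coordinate system.
train = [[(0, 0), (0, 1)], [(5, 5), (5, 6)]]
holdout = [[(1, 0), (1, 1)]]
noisy_copy = [[(0.01, 0), (0.01, 1)]]    # a real trip plus a little noise
fresh_trip = [[(1, 0.2), (1, 1.2)]]      # as far from train as the holdout

leak_detected = too_close(train, noisy_copy, holdout)
fresh_flagged = too_close(train, fresh_trip, holdout)
```

The noisy copy is flagged because it lies far closer to a training trip than any unseen real trip does, which is the pattern the holdout baseline is meant to expose.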

Conclusions

We should be loud about not compromising on privacy, no matter the benefits offered by sharing our personal information. Governments, operators, service providers, and others alike need to take privacy seriously and invest in technology that protects the individual’s rights during the whole life cycle of the data. We at Mostly AI believe that synthetic data is THE way forward for privacy-preserving data sharing. Our new Mobility Engine allows organizations to fully utilize sensitive locational data by producing safe synthetic data at a so-far unseen level of accuracy.

Acknowledgment

This research and development on synthetic mobility data is supported by a grant of the Vienna Business Agency (Wirtschaftsagentur Wien), a fund of the City of Vienna.